HTTP

The HyperText Transfer Protocol (HTTP) is the protocol browsers use to communicate with web servers.

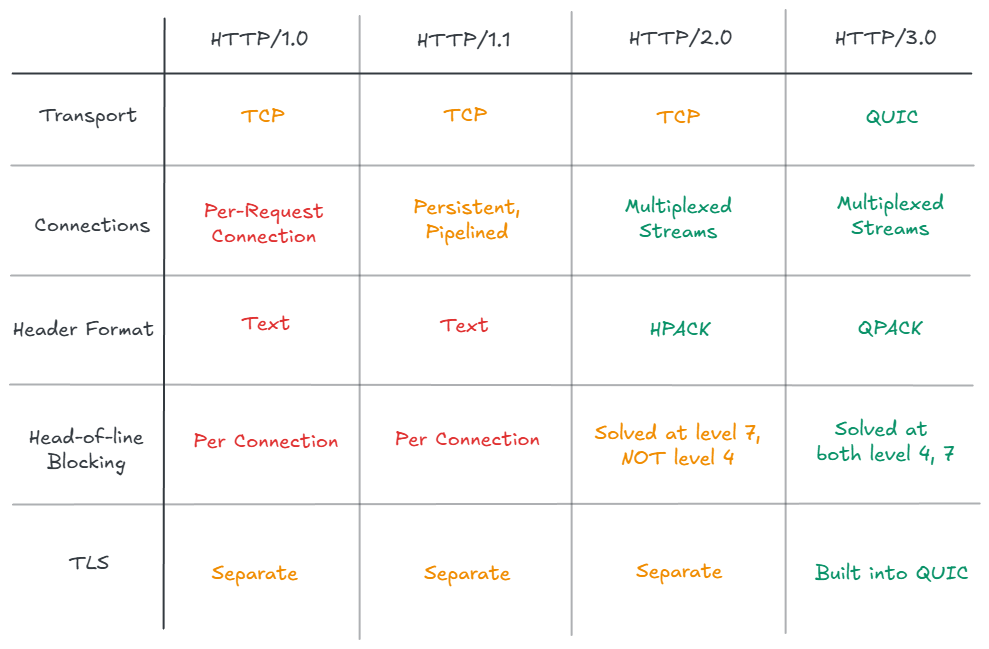

- HTTP is an application-layer protocol (Layer 7) that runs on top of a transport protocol (TCP, or QUIC in HTTP/3)

- It follows a request-response model: a client sends a request, and the server sends back a response.

HTTP has evolved significantly since its inception. The goal of this blog post is to trace that evolution and describe the features and limitations of each version.

Early HTTP Versions

HTTP/0.9 was introduced by Tim Berners-Lee as part of the World Wide Web. It only supported GET requests and did not have headers or status codes (200, 404, 500, etc.). These were introduced in HTTP/1.0 along with POST and HEAD.

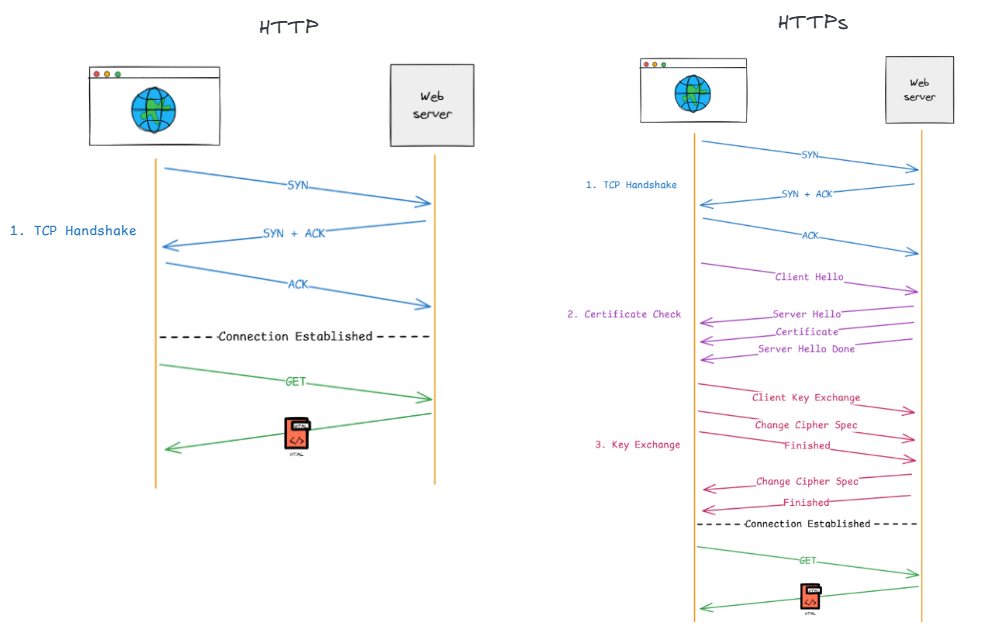

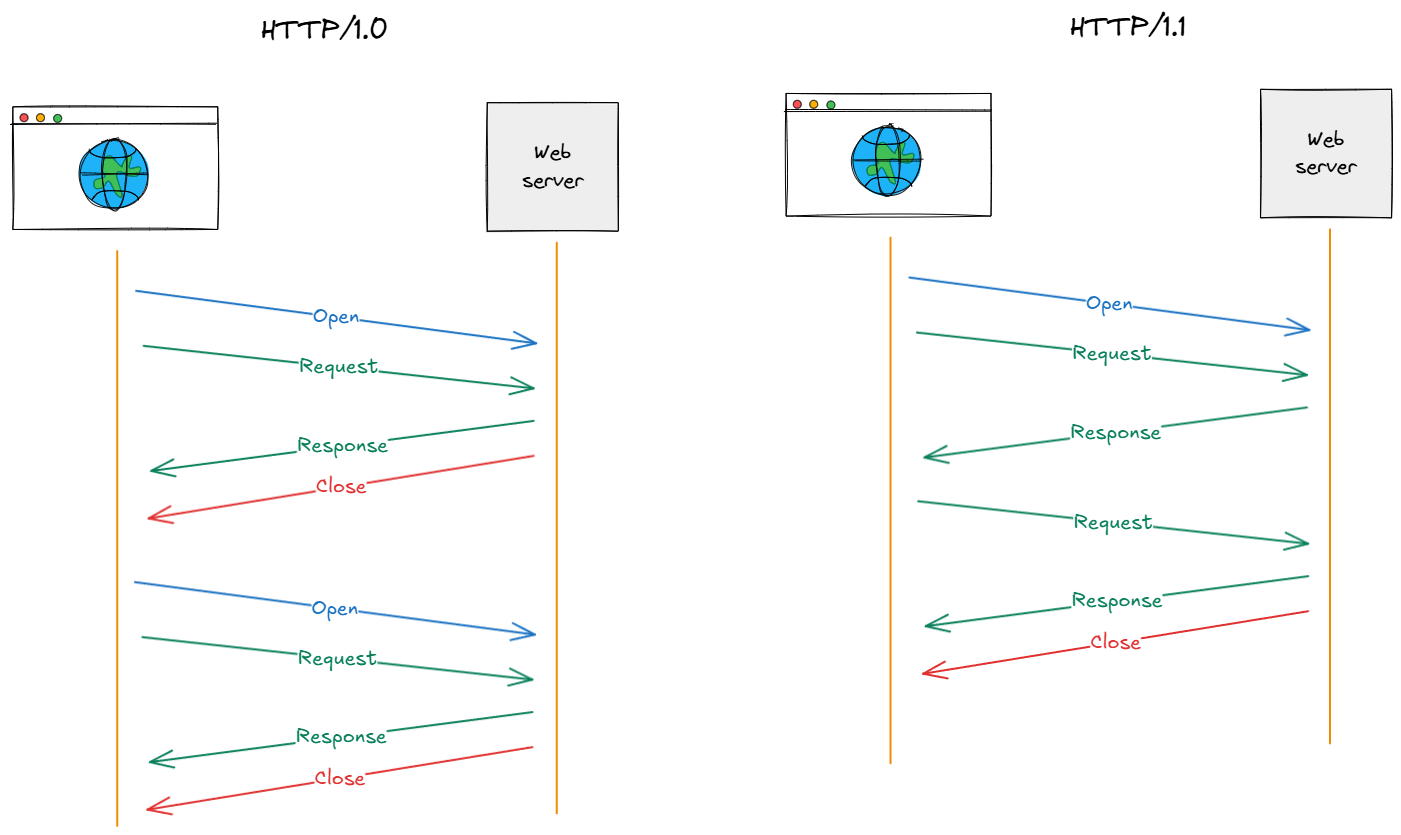
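To make the difference concrete, here is a minimal Python sketch that builds the raw request bytes for each version and parses an HTTP/1.0 status line. The function names are hypothetical helpers for illustration, and the `User-Agent` value in the usage is made up; a real client would also handle the response body and errors.

```python
def http09_request(path):
    # HTTP/0.9: only the method and path. No version, no headers,
    # and the reply is the raw document with no status line.
    return f"GET {path}\r\n".encode("ascii")


def http10_request(method, path, headers):
    # HTTP/1.0: a request line with a version, then headers, then a blank line.
    lines = [f"{method} {path} HTTP/1.0"]
    lines += [f"{name}: {value}" for name, value in headers.items()]
    return ("\r\n".join(lines) + "\r\n\r\n").encode("ascii")


def parse_status_line(response):
    # HTTP/1.0 responses start with a line such as "HTTP/1.0 404 Not Found".
    status_line = response.split(b"\r\n", 1)[0].decode("ascii")
    version, code, reason = status_line.split(" ", 2)
    return version, int(code), reason


print(http09_request("/index.html"))
print(http10_request("HEAD", "/index.html", {"User-Agent": "demo"}))
print(parse_status_line(b"HTTP/1.0 404 Not Found\r\nContent-Type: text/html\r\n\r\n"))
```

The last call returns `('HTTP/1.0', 404, 'Not Found')`: the status code and headers give the client information that an HTTP/0.9 reply simply did not carry.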

The original versions of HTTP did not support persistent connections: every request required a new TCP connection, and setting up each connection adds overhead.

In HTTP/1.0, this meant that every resource on a page (every image, CSS file, or JavaScript file) required its own connection and the corresponding handshakes.
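A back-of-the-envelope model shows why this adds up. The sketch below assumes each TCP handshake costs one round trip (RTT), each request/response costs one more, and resources are fetched one after another; it ignores TLS, TCP slow start, and parallel connections, so the numbers are illustrative only. `round_trips` is a hypothetical helper.

```python
def round_trips(num_resources: int, persistent: bool) -> int:
    """Round trips to fetch resources sequentially under a simplified model."""
    if persistent:
        # One handshake, then every request reuses the open connection.
        return 1 + num_resources
    # A fresh handshake before every single request.
    return 2 * num_resources


rtt_ms = 50  # assumed round-trip time
for persistent in (False, True):
    total = round_trips(10, persistent) * rtt_ms
    print(f"persistent={persistent}: {total} ms")
```

With an assumed 50 ms RTT and 10 resources, this prints 1000 ms without persistent connections and 550 ms with them.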

HTTP/1.1 (1997)

HTTP/1.1 addressed many of the initial problems with HTTP/1.0, and is still widely used for simple websites.

Improvements

- Persistent Connections: Instead of closing the connection after a single response, the server keeps the TCP socket open. This allows the client to send multiple requests through the same pipe without the overhead of repeated handshakes.

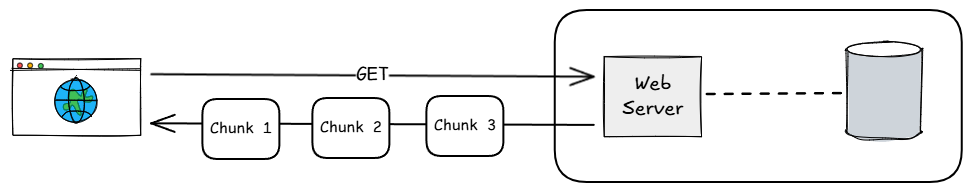
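One way to observe persistent connections is to run a local server with Python's standard `http.server` module and have it report each client's source port, which identifies the TCP connection a request arrived on. This is a sketch for experimentation: `PortEchoHandler` and `ports_seen` are hypothetical names, and it relies on `BaseHTTPRequestHandler` keeping connections open only when its `protocol_version` is `"HTTP/1.1"`.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class PortEchoHandler(BaseHTTPRequestHandler):
    """Replies with the client's source port, which identifies its TCP connection."""

    def do_GET(self):
        body = str(self.client_address[1]).encode()
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the demo quiet


def ports_seen(num_requests, server_version):
    # BaseHTTPRequestHandler closes the connection after every response when
    # protocol_version is "HTTP/1.0"; with "HTTP/1.1" it keeps it open.
    handler = type("Handler", (PortEchoHandler,), {"protocol_version": server_version})
    server = ThreadingHTTPServer(("127.0.0.1", 0), handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        # http.client transparently reconnects if the server closed the socket.
        conn = http.client.HTTPConnection("127.0.0.1", server.server_address[1])
        ports = []
        for _ in range(num_requests):
            conn.request("GET", "/")
            ports.append(conn.getresponse().read().decode())  # drain before reuse
        conn.close()
        return ports
    finally:
        server.shutdown()
        server.server_close()
```

Calling `ports_seen(3, "HTTP/1.1")` should report the same port three times, while `ports_seen(3, "HTTP/1.0")` typically reports a different port per request, because the client has to open a new connection after each response.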

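- Chunked transfer encoding: Servers could send a response body as a series of chunks, each prefixed with its size, without knowing the total length up front. This let browsers start rendering pages sooner, since the server didn’t have to wait for the whole response to be generated before it started sending.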

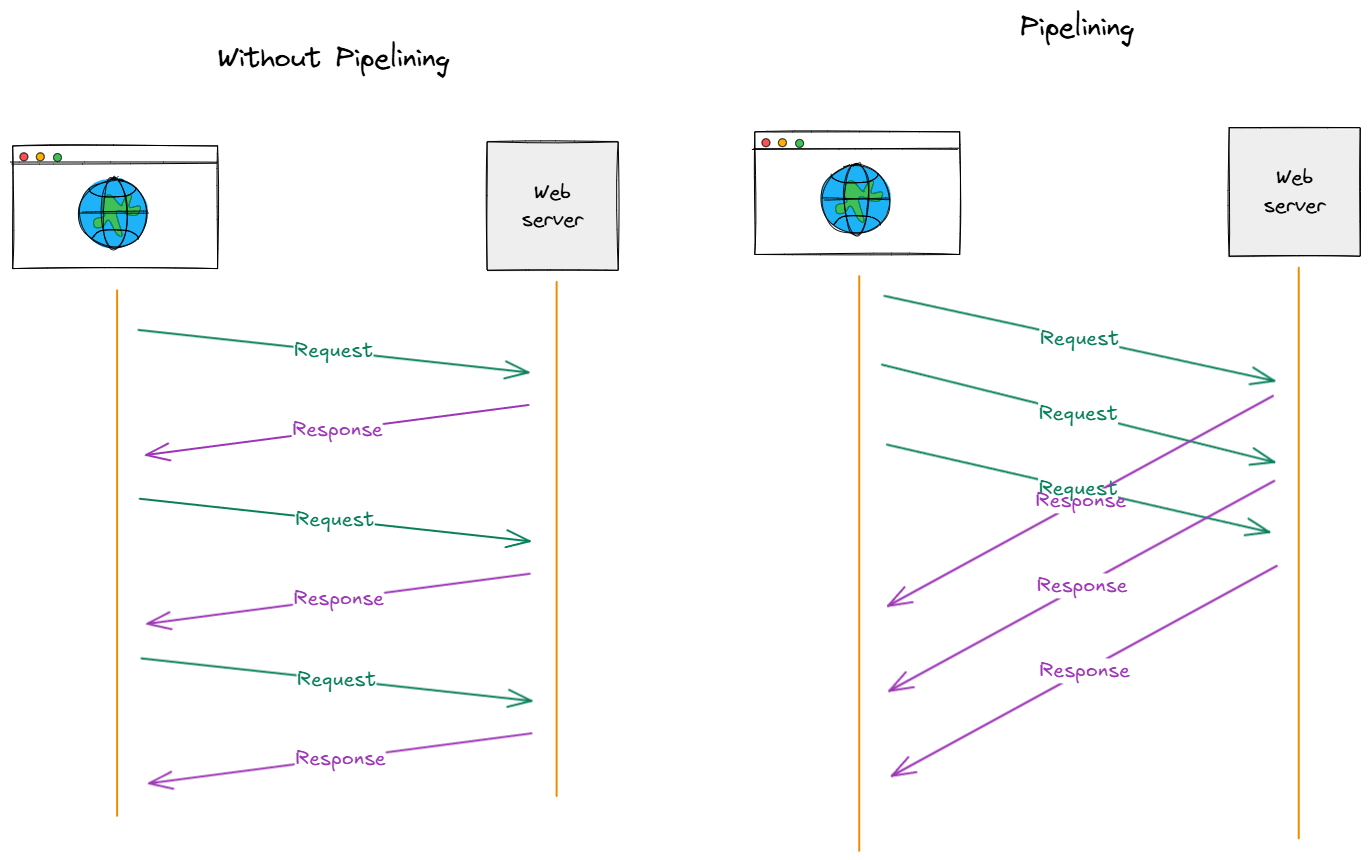
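On the wire, each chunk is its size in hexadecimal, a CRLF, the data, and another CRLF; a zero-size chunk marks the end. A minimal Python encoder and decoder for that format might look like the following. The helper names are hypothetical, and the decoder assumes well-formed input with no trailer headers.

```python
def chunked_encode(chunks):
    """Encode an iterable of byte strings using chunked transfer encoding."""
    out = bytearray()
    for chunk in chunks:
        if not chunk:
            continue  # a zero-length chunk would signal the end of the body
        out += f"{len(chunk):x}\r\n".encode("ascii") + chunk + b"\r\n"
    out += b"0\r\n\r\n"  # last chunk, followed by an empty trailer section
    return bytes(out)


def chunked_decode(data):
    """Decode a chunked body (assumes well-formed input and no trailers)."""
    body = bytearray()
    pos = 0
    while True:
        line_end = data.index(b"\r\n", pos)
        # The size line may carry ";extension" parameters; ignore them.
        size = int(data[pos:line_end].split(b";")[0], 16)
        pos = line_end + 2
        if size == 0:
            return bytes(body)
        body += data[pos:pos + size]
        pos += size + 2  # skip the data and its trailing CRLF


print(chunked_encode([b"Hello, ", b"world"]))
```

For example, `chunked_encode([b"Hello, ", b"world"])` produces `7\r\nHello, \r\n5\r\nworld\r\n0\r\n\r\n`, and the server could have sent the first chunk before the second one even existed.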

- Pipelining: Instead of waiting for each response before sending the next request, the client could send multiple requests back-to-back over the same connection.
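Pipelining can be tried against a local server by writing several requests to a raw socket in a single call before reading anything back. In this sketch (hypothetical names, standard library only), the server echoes each request's path, and the last request carries `Connection: close` so the client knows when every response has arrived.

```python
import socket
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class PathEchoHandler(BaseHTTPRequestHandler):
    protocol_version = "HTTP/1.1"  # keep the connection open between requests

    def do_GET(self):
        body = self.path.encode()  # echo the requested path back as the body
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the demo quiet


def pipeline(paths):
    """Send all requests in one write, then return the raw bytes of every response."""
    server = ThreadingHTTPServer(("127.0.0.1", 0), PathEchoHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    host, port = server.server_address
    requests = []
    for i, path in enumerate(paths):
        # Ask the server to close after the last response so recv() hits EOF.
        close = "Connection: close\r\n" if i == len(paths) - 1 else ""
        requests.append(f"GET {path} HTTP/1.1\r\nHost: {host}\r\n{close}\r\n")
    try:
        with socket.create_connection((host, port)) as sock:
            sock.sendall("".join(requests).encode("ascii"))  # no waiting in between
            chunks = []
            while data := sock.recv(4096):
                chunks.append(data)
        return b"".join(chunks)
    finally:
        server.shutdown()
        server.server_close()
```

Because one handler processes the requests on that connection one at a time, the echoed paths come back in the order they were sent, which is the ordering constraint behind head-of-line blocking.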

Head-of-Line Blocking

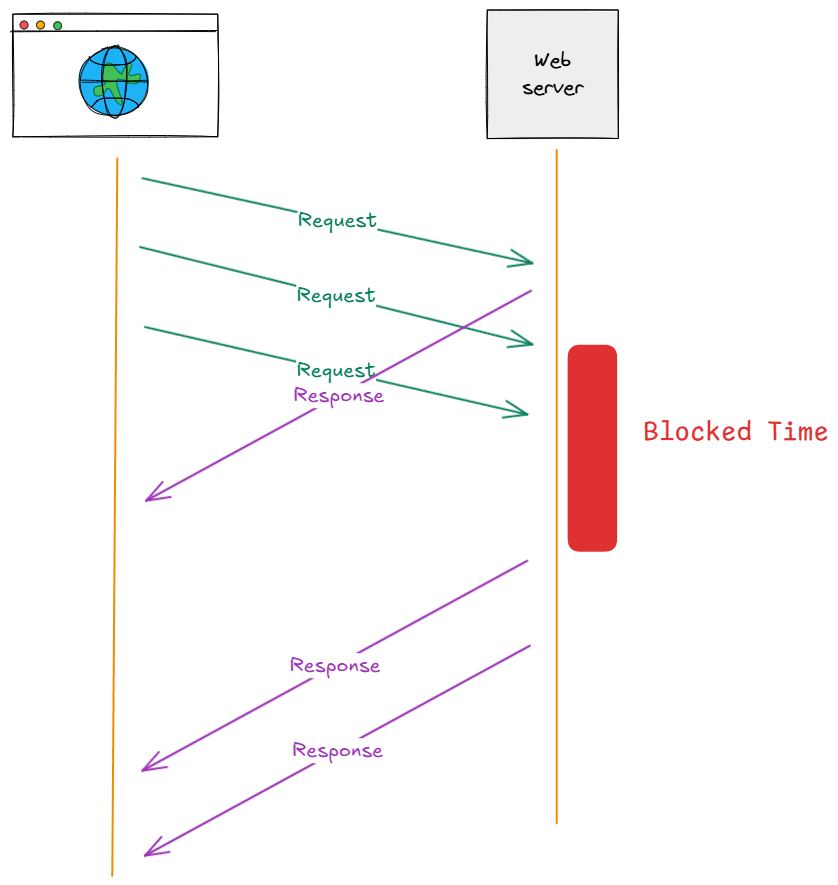

Pipelining failed in practice due to head-of-line blocking.

- The server must send responses in the same order in which the requests were received.

- If the first response is slow to generate, every response queued behind it is blocked too, even those that are already ready to send.
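A tiny simulation makes the problem concrete. Suppose `ready_times[i]` is when the server finishes generating response `i` (an assumed input, in arbitrary time units). Because pipelined responses must go out in request order, each response can be sent no earlier than the one before it. Transmission time is ignored to keep the sketch minimal, and `in_order_send_times` is a hypothetical helper.

```python
def in_order_send_times(ready_times):
    """Earliest send time of each response when responses must stay in order."""
    send_times = []
    latest = 0
    for ready in ready_times:
        # A response waits for its own data and for every earlier response.
        latest = max(latest, ready)
        send_times.append(latest)
    return send_times


# The first response is slow; the other two are ready almost immediately.
print(in_order_send_times([5, 1, 1]))  # [5, 5, 5]
```

Without the ordering constraint, the second and third responses could have been sent at time 1.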

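Developers worked around this in a few ways:

- Domain sharding: Serve static assets from several subdomains. Browsers limit the number of parallel connections per host, so spreading assets across hosts lets them open more connections at once.

- Bundling: Combine assets (for example, by concatenating JavaScript or CSS files) so the page makes fewer requests.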
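Domain sharding is often implemented by mapping each asset path deterministically to one of the shard hosts. The Python sketch below uses a CRC32 hash for that mapping; the hostnames and the `shard_url` helper are hypothetical, for illustration only.

```python
import zlib

# Hypothetical shard hostnames, for illustration only.
SHARDS = ["static1.example.com", "static2.example.com", "static3.example.com"]


def shard_url(path, shards=SHARDS):
    """Map an asset path to one shard host, always the same one for a given path."""
    # A stable hash (not a random choice) keeps each asset at a single URL,
    # so it can still be cached.
    index = zlib.crc32(path.encode("utf-8")) % len(shards)
    return f"https://{shards[index]}{path}"


for asset in ["/css/site.css", "/js/app.js", "/img/logo.png"]:
    print(shard_url(asset))
```

Hashing instead of choosing a host at random means a given asset always gets the same URL, so browser and CDN caches keep working.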

HTTP/2 (2015)

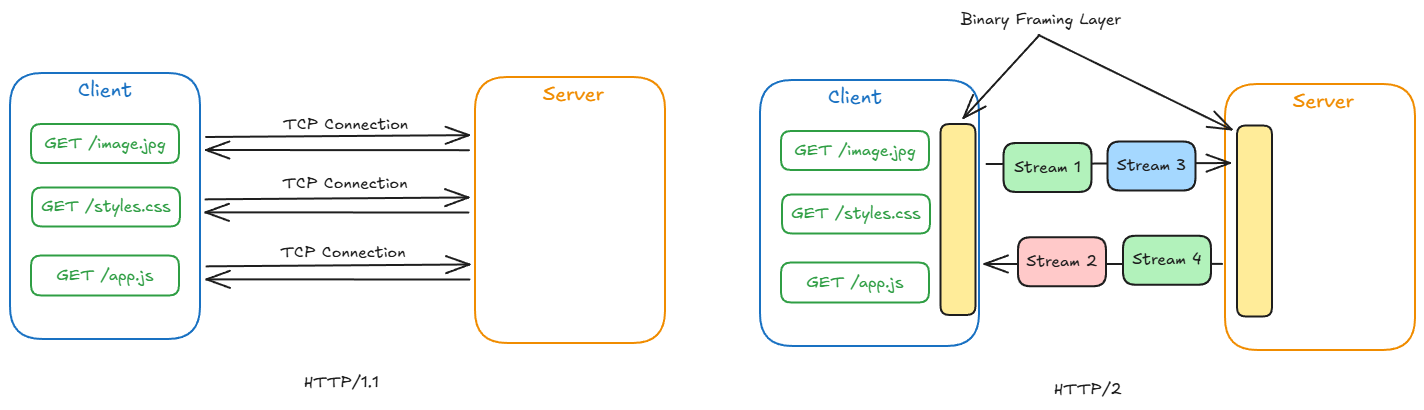

The major goal of HTTP/2 was to reduce latency and address head-of-line blocking at the application layer.

Improvements

- Binary Framing: Instead of plain-text messages, HTTP/2 splits messages into binary frames.
  - HEADERS frames carry the metadata for the request/response. These are encoded using HPACK: both endpoints maintain a table of headers (a predefined static table plus a dynamic table of headers sent earlier on the connection). Instead of resending a repeated header, the sender can send its index, and the receiver looks up the corresponding header.
  - DATA frames carry the message body.
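Every HTTP/2 frame starts with a fixed 9-byte header: a 24-bit payload length, an 8-bit type, 8 bits of flags, and a reserved bit followed by a 31-bit stream identifier (RFC 9113, section 4.1). The Python sketch below packs and unpacks that header. The type codes (`DATA = 0x0`, `HEADERS = 0x1`) and the `END_STREAM` flag value come from the spec, while the function names are hypothetical; a real sender must also respect the peer's maximum frame size (16,384 bytes by default).

```python
DATA = 0x0
HEADERS = 0x1
END_STREAM = 0x1  # flag: this frame is the last one on its stream


def encode_frame(frame_type, flags, stream_id, payload):
    """Prefix a payload with an HTTP/2 frame header."""
    if len(payload) >= 1 << 24:
        raise ValueError("payload too large for a 24-bit length field")
    return (
        len(payload).to_bytes(3, "big")
        + bytes([frame_type, flags])
        + (stream_id & 0x7FFFFFFF).to_bytes(4, "big")  # reserved bit left as 0
        + payload
    )


def decode_frame(buf):
    """Split one frame off the front of buf: (type, flags, stream_id, payload, rest)."""
    length = int.from_bytes(buf[0:3], "big")
    frame_type, flags = buf[3], buf[4]
    stream_id = int.from_bytes(buf[5:9], "big") & 0x7FFFFFFF  # ignore reserved bit
    payload = buf[9:9 + length]
    return frame_type, flags, stream_id, payload, buf[9 + length:]


frame = encode_frame(DATA, END_STREAM, 1, b"hello")
print(frame.hex())  # 000005000100000001 followed by the payload bytes
```

Because the length comes first, a receiver always knows exactly where one frame ends and the next begins, which is what makes interleaving frames from different streams possible.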
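The indexing idea behind HPACK can be illustrated with a toy table that the sender and receiver each maintain in lockstep. This is deliberately not the real HPACK format: it omits the 61-entry static table, Huffman coding, size-based eviction, and HPACK's actual index numbering. `HeaderTable` is a hypothetical name.

```python
class HeaderTable:
    """Toy header index kept in sync by sender and receiver (not real HPACK)."""

    def __init__(self):
        self.entries = []  # (name, value) pairs seen so far, in order

    def encode(self, headers):
        """Replace headers already in the table with their index."""
        out = []
        for header in headers:
            if header in self.entries:
                out.append(self.entries.index(header))  # send only the index
            else:
                self.entries.append(header)  # remember it for next time
                out.append(header)  # send the literal header
        return out

    def decode(self, items):
        """Expand indexes back into headers, learning new literals as they arrive."""
        headers = []
        for item in items:
            if isinstance(item, int):
                headers.append(self.entries[item])
            else:
                self.entries.append(item)
                headers.append(item)
        return headers


sender, receiver = HeaderTable(), HeaderTable()
request = [(":method", "GET"), ("user-agent", "example-browser/1.0")]
first = sender.encode(request)  # both headers go out as literals
second = sender.encode(request)
print(second)  # [0, 1]
print(receiver.decode(first) == request, receiver.decode(second) == request)  # True True
```

The first request goes out as literals; when the same headers repeat, only the indexes `[0, 1]` are sent.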

- Multiplexing: Multiple streams (request/response pairs) are interleaved over a single TCP connection.

This avoids application-level head-of-line blocking: because frames from separate streams share a single connection and can be interleaved, a slow response no longer holds up the responses behind it.
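The sketch below splits several response bodies into fixed-size frames, interleaves them round-robin onto one simulated connection, and reassembles them by stream ID on the receiving side (client-initiated streams use odd IDs, hence 1 and 3). Round-robin is an assumption for illustration; real HTTP/2 implementations schedule frames according to flow control and their own prioritization logic. The function names are hypothetical.

```python
from collections import defaultdict


def interleave(streams, frame_size):
    """Turn {stream_id: body} into a list of (stream_id, chunk) in wire order."""
    queues = {
        sid: [body[i:i + frame_size] for i in range(0, len(body), frame_size)]
        for sid, body in streams.items()
    }
    wire = []
    while any(queues.values()):
        for sid, chunks in queues.items():
            if chunks:
                wire.append((sid, chunks.pop(0)))  # one frame per stream per round
    return wire


def reassemble(wire):
    """Group frames back into complete bodies using their stream IDs."""
    bodies = defaultdict(bytes)
    for sid, chunk in wire:
        bodies[sid] += chunk
    return dict(bodies)


wire = interleave({1: b"aaaa", 3: b"bb"}, frame_size=2)
print(wire)  # [(1, b'aa'), (3, b'bb'), (1, b'aa')]
print(reassemble(wire))  # {1: b'aaaa', 3: b'bb'}
```

Stream 3's short body finishes after the second frame instead of waiting for all of stream 1.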

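However, TCP-level head-of-line blocking remains. TCP delivers bytes to the application strictly in order, so if a packet carrying one frame is lost, every frame behind it is held back until the lost packet is retransmitted, even frames that belong to other streams.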
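A simplified model of TCP's in-order delivery shows why frames behind a loss get stuck. Each entry in `segments` is the stream ID of the frame carried by one TCP segment, in sequence order, and `received` is the set of segment positions that arrived; both are hypothetical inputs, and the model assumes exactly one frame per segment.

```python
def delivered_to_app(segments, received):
    """Stream IDs of frames TCP can hand to HTTP/2 before the first gap."""
    delivered = []
    for position, stream_id in enumerate(segments):
        if position not in received:
            break  # everything after a missing segment waits for retransmission
        delivered.append(stream_id)
    return delivered


# Segment 1 (a frame for stream 3) is lost; segments 2 and 3 arrive anyway.
print(delivered_to_app([1, 3, 1, 3], received={0, 2, 3}))  # [1]
```

Although the frames in segments 2 and 3 have arrived, the application sees only the first frame until segment 1 is retransmitted.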
